What is Kong API Gateway?

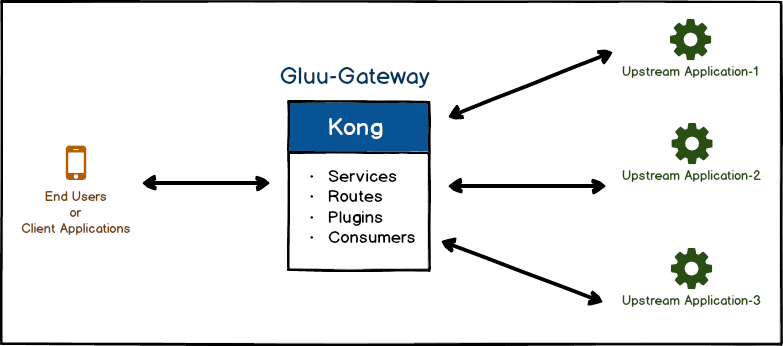

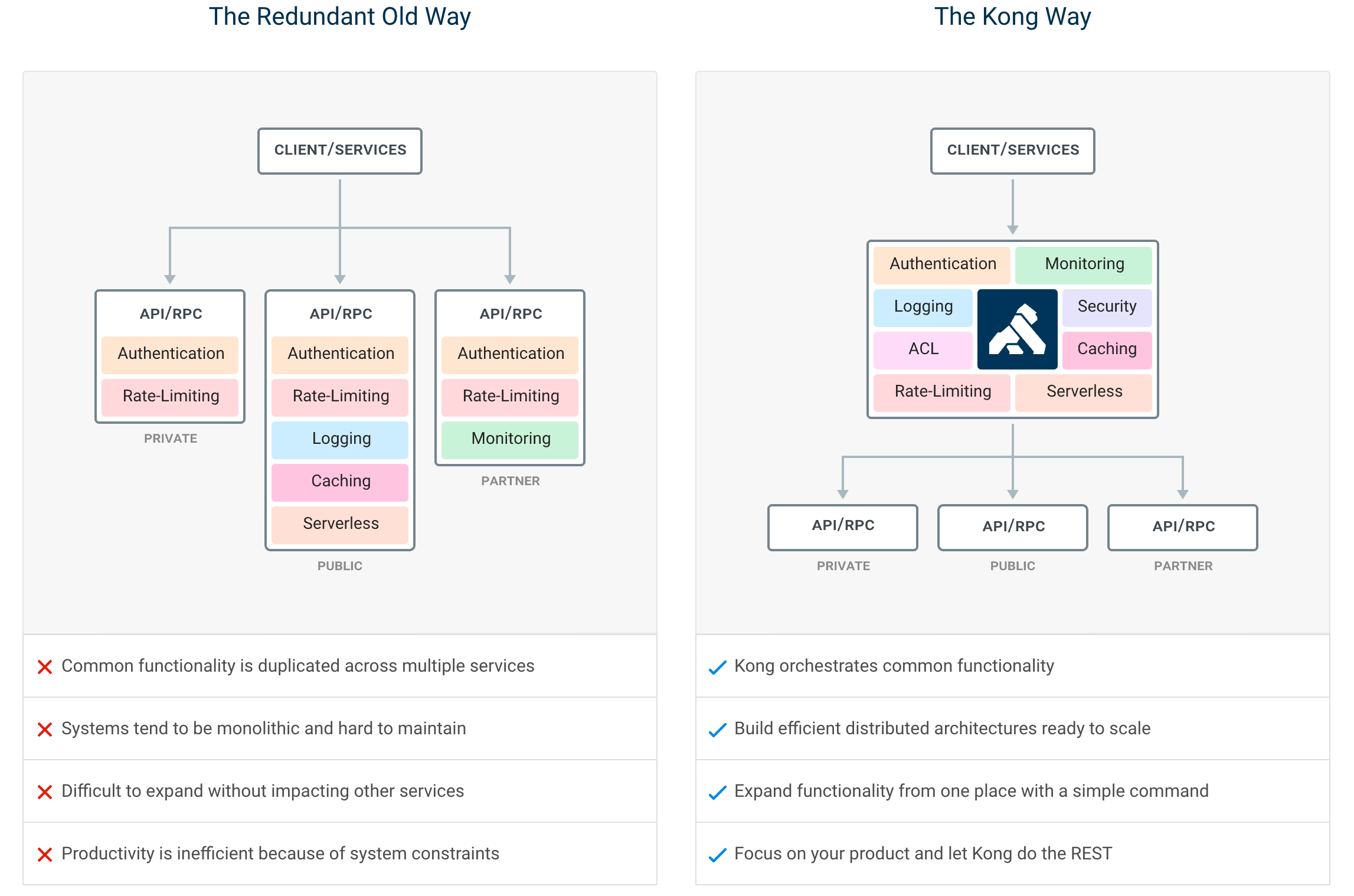

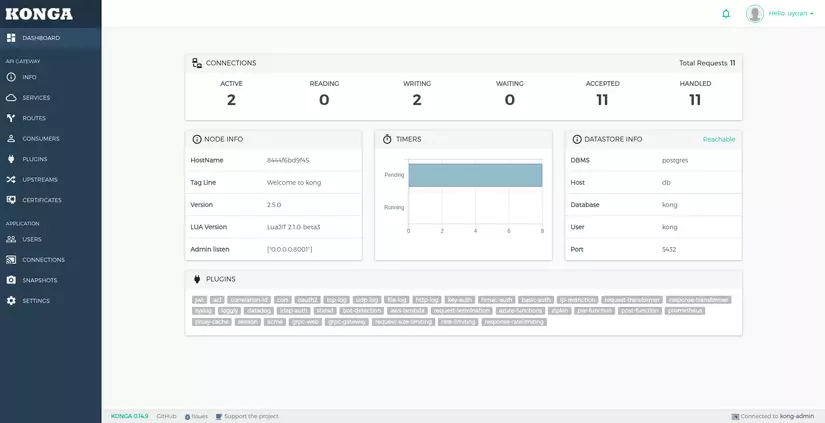

Kong is an API gateway and orchestration layer for microservices. It provides a flexible abstraction layer that securely manages communication between clients and microservices via APIs. It is also described as an API gateway, API middleware, or in some cases a service mesh. Kong has been available as an open-source project since 2015, and its core values are high performance and extensibility. Its datastore can be either Cassandra or Postgres. Since version 1.1 it has also been possible to run Kong without a database.

Kong Server is a Lua application built on top of NGINX that acts as an API front end. The server forwards traffic between consumers and the APIs, and Kong’s flexible plugin architecture makes it easy to add functionality such as rate limiting, authentication, request/response transformation, and more.

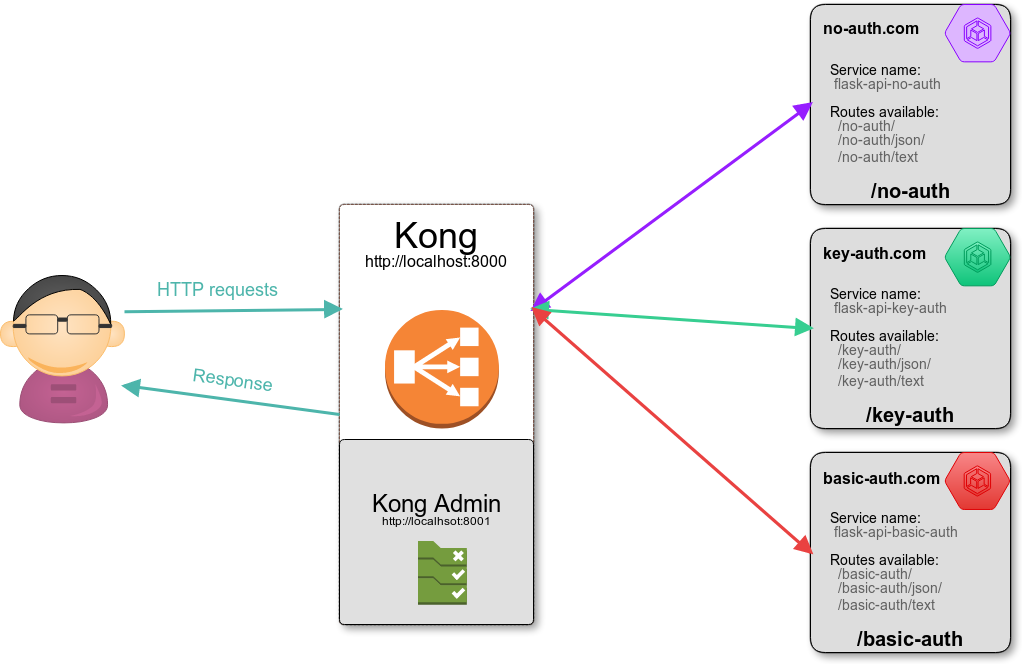

Kong API Gateway exposes two endpoints:

- a proxy endpoint that forwards HTTP (port 8000) and HTTPS (port 8443) traffic

- an Admin API endpoint for managing and configuring the server, which listens on port 8001 for HTTP requests and port 8444 for HTTPS requests

Kong API Gateway Key Concepts

Upstream

An Upstream object refers to your upstream API/service sitting behind Kong, to which client requests are forwarded. An Upstream object represents a virtual hostname and can be used to load balance incoming requests over multiple services (targets). For example, an Upstream named service.v1.xyz can back a Service object whose host is service.v1.xyz. Requests for this Service object are then proxied to the targets defined within the Upstream.
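To make the load-balancing idea concrete, here is a minimal sketch of a weighted round-robin rotation over an Upstream's targets. The target addresses and weights are made up for illustration, and this is a simplification rather than Kong's actual balancer.

```python
from itertools import cycle


def build_rotation(targets):
    """Expand a {target_address: weight} mapping into an endless rotation.

    A target with weight 2 appears twice as often as one with weight 1.
    """
    rotation = []
    for address, weight in targets.items():
        rotation.extend([address] * weight)
    return cycle(rotation)


# Hypothetical targets registered under the Upstream "service.v1.xyz".
targets = {"10.0.0.1:8080": 2, "10.0.0.2:8080": 1}
rotation = build_rotation(targets)

# Simulate six proxied requests and record which target receives each one.
picks = [next(rotation) for _ in range(6)]
```

With these weights, the first target receives two out of every three requests.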

Client

A Kong Client refers to the downstream client making requests to Kong’s proxy port. It could be another service in a distributed application, a user’s identity, a user’s browser, or a specific device.

Consumer

A Consumer object represents a client of a Service.

A Consumer is also the Admin API entity representing a developer or machine using the API. When using Kong, a Consumer only communicates with Kong which proxies every call to the said upstream API.

Host

A Host represents the domain hosts (using DNS) intended to receive upstream traffic. In Kong, it is a list of domain names that match a Route object.

Plugin

Plugins provide advanced functionality and extend the use of Kong Enterprise, allowing you to add new features to your gateway. Plugins can be configured to run in a variety of contexts, ranging from a specific route to all upstreams. Plugins can perform operations in your environment, such as authentication, rate-limiting, or transformations on a proxied request.
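As a rough illustration of plugin scoping, the sketch below builds the Admin API path and JSON body for enabling a plugin globally, on a Service, or on a Route. The helper name `plugin_request` and the Service name `billing` are hypothetical; the example assumes the open-source rate-limiting plugin with a per-minute limit, and it only prepares the request data without sending anything.

```python
import json


def plugin_request(plugin_name, config=None, service=None, route=None):
    """Return (admin_api_path, json_body) for enabling a plugin.

    This sketch handles one scope at a time: global, one Service, or one Route.
    """
    if service and route:
        raise ValueError("this sketch handles one scope at a time")
    if service:
        path = f"/services/{service}/plugins"
    elif route:
        path = f"/routes/{route}/plugins"
    else:
        path = "/plugins"  # applies to every request passing through Kong
    payload = {"name": plugin_name}
    if config:
        payload["config"] = config
    return path, json.dumps(payload)


# Limit the hypothetical "billing" Service to 5 requests per minute.
scoped_path, scoped_body = plugin_request(
    "rate-limiting", {"minute": 5}, service="billing"
)
# Enable the same plugin for all traffic instead.
global_path, global_body = plugin_request("rate-limiting", {"minute": 5})
```

The body would be sent as a POST to the Admin API (port 8001 by default) with a `Content-Type: application/json` header.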

Proxy

Kong is a reverse proxy that manages traffic between clients and hosts. As a gateway, Kong’s proxy functionality evaluates every incoming HTTP request against the Routes you have configured to find a matching one. If a request matches the rules of a specific Route, Kong proxies the request. Because each Route is linked to a Service, Kong first runs the plugins you have configured on the Route and its associated Service, and then proxies the request upstream.
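The matching step can be sketched as a small function that picks the Route with the longest matching path prefix, optionally restricted by host. This is a deliberately simplified model with hypothetical Route and Service names; Kong's real router also considers methods, headers, regular expressions, and priorities.

```python
from dataclasses import dataclass, field


@dataclass
class Route:
    name: str
    service: str                                # name of the associated Service
    paths: list = field(default_factory=list)   # path prefixes to match
    hosts: list = field(default_factory=list)   # empty list means "any host"


def match_route(routes, host, path):
    """Return the Route with the longest matching path prefix, or None."""
    best, best_len = None, -1
    for route in routes:
        if route.hosts and host not in route.hosts:
            continue  # host restriction not satisfied
        for prefix in route.paths:
            if path.startswith(prefix) and len(prefix) > best_len:
                best, best_len = route, len(prefix)
    return best


routes = [
    Route("billing", "billing-api", paths=["/billing"]),
    Route("invoices", "invoice-svc", paths=["/billing/invoices"]),
    Route("internal", "admin-svc", paths=["/"], hosts=["internal.example.com"]),
]

matched = match_route(routes, "api.example.com", "/billing/invoices/42")
unmatched = match_route(routes, "api.example.com", "/unknown")
```

Here `matched` is the more specific `invoices` Route, and `unmatched` is `None` because the only catch-all Route is restricted to a different host.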

Proxy Caching

One of the key benefits of using a reverse proxy is the ability to cache frequently-accessed content. The benefit is that upstream services do not need to waste computation on repeated requests.

One of the ways Kong delivers performance is through Proxy Caching, using the Proxy Cache Advanced Plugin. This plugin supports performance efficiency by providing the ability to cache responses based on requests, response codes and content type.

Kong receives a response from a service and stores it in the cache within a specific timeframe.

For future requests within the timeframe, Kong responds from the cache instead of the service.

The cache timeout is configurable. Once the time expires, Kong forwards the request to the upstream again, caches the result, and then responds from the cache until the next timeout.

The plugin can store cached data in-memory. The tradeoff is that it competes for memory with other processes, so for improved performance, use Redis for caching.
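The hit, expiry, and refresh cycle described above can be sketched with a small in-memory cache keyed by request. The class name `TTLCache`, the cache key format, and the `"hit"`/`"miss"` labels are illustrative choices, not the plugin's actual implementation; the clock is injectable so the behavior can be checked without waiting.

```python
import time


class TTLCache:
    """Cache upstream responses for a fixed number of seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self.entries = {}  # key -> (expires_at, response)

    def fetch(self, key, call_upstream):
        """Return (response, status), where status is "hit" or "miss"."""
        now = self.clock()
        entry = self.entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1], "hit"          # still fresh: answer from cache
        response = call_upstream()          # expired or absent: go upstream
        self.entries[key] = (now + self.ttl, response)
        return response, "miss"


# Demonstration with a fake clock and a fake upstream that counts its calls.
fake_now = [0.0]
upstream_calls = []


def upstream():
    upstream_calls.append(1)
    return "body-%d" % len(upstream_calls)


cache = TTLCache(30, clock=lambda: fake_now[0])
first = cache.fetch("GET /a", upstream)     # cold cache: goes upstream
fake_now[0] = 10
second = cache.fetch("GET /a", upstream)    # within 30 s: served from cache
fake_now[0] = 31
third = cache.fetch("GET /a", upstream)     # expired: goes upstream again
```

Only the first and third requests reach the upstream; the second is answered from memory.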

Rate Limiting

Rate Limiting allows you to restrict how many requests your upstream services receive from your API consumers, or how often each user can call the API. Rate limiting protects the APIs from inadvertent or malicious overuse. Without rate limiting, each user may send requests as often as they like, which can lead to spikes of requests that starve other consumers. After rate limiting is enabled, API calls are limited to a fixed number of requests per time window, for example per second.

In this workflow, we are going to enable the Rate Limiting Advanced Plugin. This plugin provides support for the sliding window algorithm to prevent the API from being overloaded near the window boundaries and adds Redis support for greater performance.
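To show why a sliding window helps near window boundaries, here is a minimal sketch of one common approximation: the previous window's count is weighted by how much of it still overlaps the sliding window. The class name and the numbers are illustrative, and this is not claimed to be the plugin's exact algorithm.

```python
class SlidingWindowLimiter:
    """Approximate sliding-window limiter built on two fixed-window counters."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window = window_seconds
        self.current_start = 0.0
        self.current_count = 0
        self.previous_count = 0

    def allow(self, now):
        """Return True if a request arriving at time `now` is allowed."""
        window_start = (now // self.window) * self.window
        if window_start != self.current_start:
            # Roll over. Only an immediately preceding window still counts.
            if window_start - self.current_start == self.window:
                self.previous_count = self.current_count
            else:
                self.previous_count = 0
            self.current_start = window_start
            self.current_count = 0
        elapsed_fraction = (now - window_start) / self.window
        estimated = (
            self.previous_count * (1 - elapsed_fraction) + self.current_count
        )
        if estimated >= self.limit:
            return False
        self.current_count += 1
        return True


limiter = SlidingWindowLimiter(limit=5, window_seconds=10)

# Six requests arrive late in the first window (t = 9.0 through 9.5).
burst = [limiter.allow(9.0 + i * 0.1) for i in range(6)]

# Just after the boundary, most of the earlier burst still counts.
after_boundary_first = limiter.allow(10.5)
after_boundary_second = limiter.allow(10.6)
```

A plain fixed-window counter would reset at t = 10 and accept five more requests immediately, letting ten through in under two seconds. In this sketch only one extra request is accepted right after the boundary.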

Role

A Role is a set of permissions that may be reused and assigned to Admins. For example, multiple admins can be assigned to a single shared role that defines permissions for a set of objects in a workspace.

Route

A Route, also referred to as Route object, defines rules to match client requests to upstream services. Each Route is associated with a Service, and a Service may have multiple Routes associated with it. Routes are entry-points in Kong and define rules to match client requests. Once a Route is matched, Kong proxies the request to its associated Service.

Service

A Service, also referred to as a Service object, represents an upstream API or microservice that Kong manages. Examples of Services include a data transformation microservice, a billing API, and so on. The main attribute of a Service is its URL (where Kong should proxy traffic to), which can be set as a single string or by specifying its protocol, host, port, and path individually.
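The two ways of describing a Service URL carry the same information. As a small sketch, the standard library can split a single URL string into the four individual fields; the helper name `service_fields` and the example URL are hypothetical, and the URL is assumed to include an explicit port.

```python
from urllib.parse import urlsplit


def service_fields(url):
    """Split a single URL string into protocol, host, port, and path."""
    parts = urlsplit(url)
    return {
        "protocol": parts.scheme,
        "host": parts.hostname,
        "port": parts.port,   # None when the URL carries no explicit port
        "path": parts.path or None,
    }


fields = service_fields("http://billing.internal:8080/v1")
```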

Before you can start making requests against a Service, you need to add a Route to it. Routes specify how (and if) requests are sent to their Services after they reach Kong. A single Service can have many Routes. After configuring the Service and the Route, you’ll be able to make requests through Kong using them.
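A minimal sketch of that workflow against the Admin API follows. It assumes Kong's Admin API is reachable at `http://localhost:8001`, and the Service name `billing`, its URL, and the Route path `/billing` are made-up examples. The code only builds the requests; passing each one to `urllib.request.urlopen` would actually send it.

```python
import json
from urllib.request import Request

ADMIN_URL = "http://localhost:8001"  # assumption: default Admin API address


def admin_post(path, payload):
    """Build (but do not send) a JSON POST request to the Admin API."""
    body = json.dumps(payload).encode("utf-8")
    return Request(
        ADMIN_URL + path,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Step 1: register the upstream API as a Service.
create_service = admin_post(
    "/services",
    {"name": "billing", "url": "http://billing.internal:8080"},
)

# Step 2: attach a Route so requests under /billing reach that Service.
create_route = admin_post(
    "/services/billing/routes",
    {"name": "billing-route", "paths": ["/billing"]},
)
```

Once both requests have succeeded, a client call to the proxy port, such as `http://localhost:8000/billing`, would be matched by the Route and forwarded to the Service.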

Super Admin

A Super Admin, or any Role with read and write access to the /admins and /rbac endpoints, creates new Roles and customizes Permissions. A Super Admin can:

- Invite and disable other Admin accounts

- Assign and revoke Roles to Admins

- Create new Roles with custom Permissions

- Create new Workspaces

Tags

Tags are customer-defined labels that let you manage, search for, and filter core entities using the ?tags querystring parameter. Each tag must be composed of one or more alphanumeric characters, _, -, ., or ~. Most core entities can be tagged via their tags attribute, either on creation or on update.
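The tag format rule can be checked with a short regular expression before a tag is used in a query. This sketch assumes ASCII alphanumerics only, and the helper names and the tag `team-a` are illustrative.

```python
import re
from urllib.parse import urlencode

# One or more ASCII letters, digits, "_", "-", "." or "~" (assumed ASCII-only).
TAG_PATTERN = re.compile(r"[A-Za-z0-9_.~-]+")


def is_valid_tag(tag):
    """Return True if the whole tag uses only the allowed characters."""
    return TAG_PATTERN.fullmatch(tag) is not None


def tagged_query(entity, tag):
    """Build an Admin API path that filters an entity collection by one tag."""
    if not is_valid_tag(tag):
        raise ValueError("invalid tag: %r" % tag)
    return "/%s?%s" % (entity, urlencode({"tags": tag}))


query = tagged_query("services", "team-a")
```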

Teams

Teams organize developers into working groups, implement policies across entire environments, and onboard new users while ensuring compliance. Role-Based Access Control (RBAC) and Workspaces allow users to assign administrative privileges and grant or limit access privileges to individual users and consumers, entire teams, and environments across the Kong platform.

Workspaces

Workspaces enable an organization to segment objects and admins into namespaces. The segmentation allows teams of admins sharing the same Kong cluster to adopt roles for interacting with specific objects. For example, one team (Team A) may be responsible for managing a particular service, whereas another team (Team B) may be responsible for managing another service.

Many organizations have strict security requirements. For example, organizations need the ability to segregate the duties of an administrator to ensure that a mistake or malicious act by one administrator does not cause an outage.
